German Chancellor Angela Merkel has attracted a lot of (mostly favourable) attention for criticising the recent removal of Donald Trump’s social media accounts.

As it is well known that she has strong political disagreements with the outgoing US President, her comments have been interpreted by some as a powerful argument for free speech.

Merkel coming to the defence of Trump might appear to be an example of someone following the maxim that “I disapprove of what you say, but I will defend to the death your right to say it.”

Now, I am an admirer of Chancellor Merkel for her political skills and record steering Germany through some very difficult times, but the record of her Government has certainly not been one of defending speech they disapprove of at home.

Donald’s German Alter Ego

Let us imagine for a moment that the Drumpf family had not emigrated to the US and that their rambunctious offspring, Donald, had tried to promote his brand of populist politics in Germany instead.

[NB Yes, I know the Drumpfs changed their name back in the 1600s and so were firmly ‘Trump’ when they emigrated, but please allow me some poetic licence in adopting this name for my imaginary German politician.]

Each day, Drumpf would stir the pot by posting on social media a heady brew of attacks on journalists, news media organisations, politicians, companies, segments of society (generally not White), political leaders in other countries, and so on.

Many of his claims would be easily shown to be false, others would simply be offensive, and a fair few could be interpreted as inciting violence.

And each day, his opponents would complain to the platforms about these attacks and... in many cases, the platforms would quickly take them down (or at least make them invisible to anyone who lives in Germany).

In other words, Angela Merkel might not have to worry too much about German Donald Drumpf having a Twitter account because there would be significant controls over the content it could push into people’s feeds.

Now, this may seem odd to those of you who have diligently filed reports with various platforms about US Donald Trump’s posts, only to be disappointed by replies saying that the posts don’t breach their standards and so will not be removed.

In some cases, platforms have attached labels to President Trump’s content but they have rarely removed it altogether.

So why would this be different in Germany when platforms generally claim to have global standards?

Well, this is largely thanks to a law brought in by Angela Merkel’s own government with the intent of forcing platforms to remove more content.

This law was created in the run-up to the last German Federal Election in 2017 with the support of politicians in the CDU-SPD coalition, who saw it as a helpful way to counter the growth of far-right populist individuals and organisations.

This law is called the Network Enforcement Act (also known as the Netzwerkdurchsetzungsgesetz, or ‘NetzDG’ to its friends) and is often talked about as an anti-hate speech law, but it is much more than that: it is an anti-Drumpf law.

That’s quite a bold claim to make so let’s tease this out some more.

Notice and Takedown On Steroids

In the EU, unlike in the US, the law does not give platforms full protection against liability for content that they carry.

Platforms are instead granted a limited protection that only lasts until they are made aware of the existence of some kind of illegal content – ‘ignorance is bliss’ in this model.

Once someone has told a platform that something is illegal they should, in theory, act quickly to remove it (if they agree it is in fact against the law) to avoid facing any penalties themselves.

In practice, it can be quite hard to pursue cases against platforms even where they have been put on notice but failed to act against allegedly illegal content.

In some cases, especially for defamation, someone is motivated enough to drag a platform into a court case and ensure they feel some pain if they did not stop the harm by removing the offending content promptly.

But in many other cases, for example with hate speech, the harm is more general and, without a specific victim, it is not clear who would take this to court and seek sanctions against the platform.

This was the concern that faced German politicians in 2015-16 during what came to be known as the ‘migrant crisis’ – they felt that platforms were not acting when notified about illegal content in Germany, and that this was fuelling social disorder and the rise of the populist far right.

We might describe the law they came up with as ‘notice and takedown on steroids’, as it sticks to the existing model where platforms only become liable when notified about illegal content, but dramatically raises the stakes for them if they fail to act on those notices.

The legal innovation it uses to do this is that significant penalties can be imposed on platforms if they don’t meet specific requirements for how they process notices of illegal content, including provisions on timeliness of action and reporting on their work.

What it does not do is change the underlying definitions of what constitutes illegal speech in Germany, which remain as they always were in the Criminal Code.

So, if you were thinking that the NetzDG is a law which makes online hate speech illegal, that would be inaccurate; it is rather a law that puts pressure on platforms to remove content that other provisions in German law already define as illegal (including but not limited to hate speech).

When we look at what those provisions are in German law that the platforms are now super motivated to enforce against, this is where the fun stuff starts for Donald Drumpf.

A Quick Tour of Illegal Speech

The Network Enforcement Act covers a range of different offences in the German Criminal Code, but let’s look at the ones that might be most relevant if a German were to emulate Donald Trump in their online speech.

There is of course a hate speech provision, which is positioned as a measure to prevent social conflict – ‘a disturbance of the public peace’.

Section 130 Incitement of masses

(1) Whoever, in a manner which is suitable for causing a disturbance of the public peace,

1. incites hatred against a national, racial, religious group or a group defined by their ethnic origin, against sections of the population or individuals on account of their belonging to one of the aforementioned groups or sections of the population, or calls for violent or arbitrary measures against them or

2. violates the human dignity of others by insulting, maliciously maligning or defaming one of the aforementioned groups, sections of the population or individuals on account of their belonging to one of the aforementioned groups or sections of the population

incurs a penalty of imprisonment for a term of between three months and five years.

So, if statements denigrate or threaten groups of people on the basis of their nationality then these are going to have to go.

And this law can be used by any group in society that is subject to hatred, not just your typical protected categories, so harsh language against political movements like BLM or ‘antifa’ may also cross the line here.

Next up, we have Insult, which may kick in when our irascible Donald Drumpf has a go at individuals rather than groups of people.

Section 185 Insult

The penalty for insult is imprisonment for a term not exceeding one year or a fine and, if the insult is committed by means of an assault, imprisonment for a term not exceeding two years or a fine.

If a victim of Drumpf’s abuse wanted this taken down, they would go to the platform claiming that the insults were criminal under Section 185 and there is a good chance that this would lead to the removal of the content.

The plain text of the law does not help us much in defining exactly when an insult crosses the line to become criminal, so platforms have to seek advice from lawyers, who will look at case law and take a view on whether something is ‘likely illegal’ or ‘likely legal’.

Where content is judged ‘likely illegal’ (a fairly low bar, judging from advice notes I have read over the years), the platform knows that a failure to act will expose it to the threat of serious sanctions under NetzDG.

So, it seems likely that anyone going around calling people they don’t like stupid and ugly, or worse, is not going to pass muster in Germany.

Next up, we have ‘malicious gossip’ which is intended to be a strong disincentive against making negative claims about people, unless you are really confident of your ground.

Section 186 Malicious gossip (üble Nachrede)

Whoever asserts or disseminates a fact about another person which is suitable for degrading that person or negatively affecting public opinion about that person, unless this fact can be proved to be true, incurs a penalty of imprisonment for a term not exceeding one year or a fine and, if the offence was committed publicly or by disseminating material (section 11 (3)), a penalty of imprisonment for a term not exceeding two years or a fine.

Here, the law gives a basis for complaint to anyone who feels that someone is talking badly about them, for example claiming that you are ‘corrupt’ or ‘incompetent’ in the performance of your job.

You may have noticed the nice twist in this provision: the burden is on the speaker to back up their claims by proving that what they have said is true.

So, if Drumpf posts something about you with the intent of damaging your standing, and you report this to the platform as malicious gossip, then the platform would have to ask Drumpf to back up the claim.

If Drumpf cannot or will not defend his claim about you with facts to support it then the platform is most likely to take that content down.

Finally, we can look at the ‘classic’ defamation provision which is available in German law as a criminal offence.

Section 187 Defamation

Whoever, despite knowing better, asserts or disseminates an untrue fact about another person which is suitable for degrading that person or negatively affecting public opinion about that person or endangering said person’s creditworthiness incurs a penalty of imprisonment for a term not exceeding two years or a fine, and, if the act was committed publicly, in a meeting or by disseminating material (section 11 (3)), a penalty of imprisonment for a term not exceeding five years or a fine.

If we imagine that Drumpf takes a dislike to the people running German elections, and falsely claims they have rigged machines or makes up stories about their ownership when these are clearly contradicted by the publicly available facts, then he is going to be exposed under this law.

Of course, we have seen that US Trump’s team are also being sued for defamation by Dominion Voting Systems, and the right to claim defamation is universal rather than a particularly German thing.

But when you combine defamation law with NetzDG you do get something more powerful than when relying on defamation law alone.

If a German equivalent to Dominion Voting Systems filed NetzDG reports to platforms claiming defamation then there would be a strong incentive for them to remove this content without delay.

In the US, platforms have broad exemptions from liability even where the content proves to be defamatory, so they have discretion over whether they want to remove it.

In a typical EU notice and takedown regime a platform is potentially liable once notified, but this risk only becomes material if the case comes to court and the defamation and the platform’s part in it are proven.

Under NetzDG, the risk of sanction is much more immediate and direct for a platform, as the regulator can fine it for its handling of defamation claims, whether or not these later turn out to be well-founded.

This makes it unlikely that a platform would do anything other than swiftly remove claims that appear defamatory on their face, which would include many of the claims made by US Trump in relation to the election.

So, when we add all this together then we get to a place where Drumpf may not have much content left.

The platforms, operating under the terms of NetzDG, have removed attacks on groups in society, insults where the named individuals have complained, malicious claims about people where he can’t back these up with facts, and anything that appears defamatory.

Drumpf might be able to keep his account, and his feed would still be there, but it would be an anodyne beast, not at all like that of his US counterpart.

Alternative Codes

At this point, you may be horrified by the extent to which German law would fillet the content produced by someone like President Trump, or delighted by what I have described and thinking ‘where can I get me one of them NetzDGs?’

Whatever your position on these specific restrictions, you may still consider that Angela Merkel is standing up for an important principle – that governments, not private companies, should decide the limits on speech.

And this is what I will explore in the last section of this post – the relationship between platform speech standards and those set by governments.

Platforms will be successful when they meet the expectations of their user communities and this goal will be reflected in the content standards they implement.

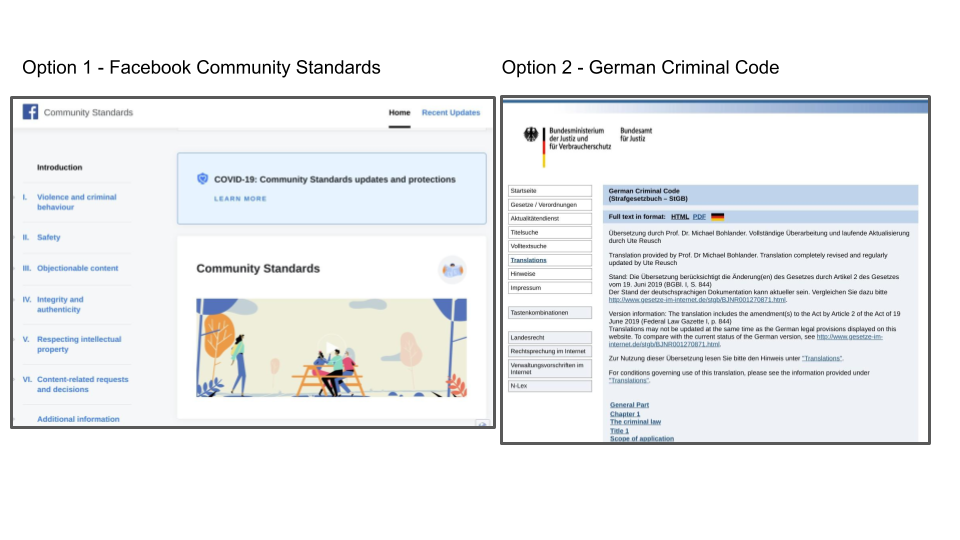

In some countries, there is a genuine choice – between local standards that politicians have debated and set down in law, and global standards that platforms have created and linked to their terms of service.

Germany is just such a country: it has extensively debated and agreed its own definitions of what speech is permitted and prohibited, and you could imagine a German platform feeling comfortable about adopting the ‘German legal standard’ rather than developing its own rules.

Applying this standard would still be likely to support a safe and orderly environment on the platform, as, if anything, it may be more restrictive than the standards a commercial entity would cook up for itself.

[NB We should note that translating legal standards into operable rules would be a significant challenge as case law is unlikely to cover all the scenarios faced by a platform and can be unclear or contradictory.]

A similar choice will be on offer in other countries that have well-established speech laws, but levels of comfort about deferring to these local legal standards may vary quite widely depending on the regime making the laws.

It may be tempting to try and roll this principle over to the US, and argue that platforms should apply the ‘American legal standard’ which is assumed to be a rule favouring nearly unlimited free speech as defined in the First Amendment.

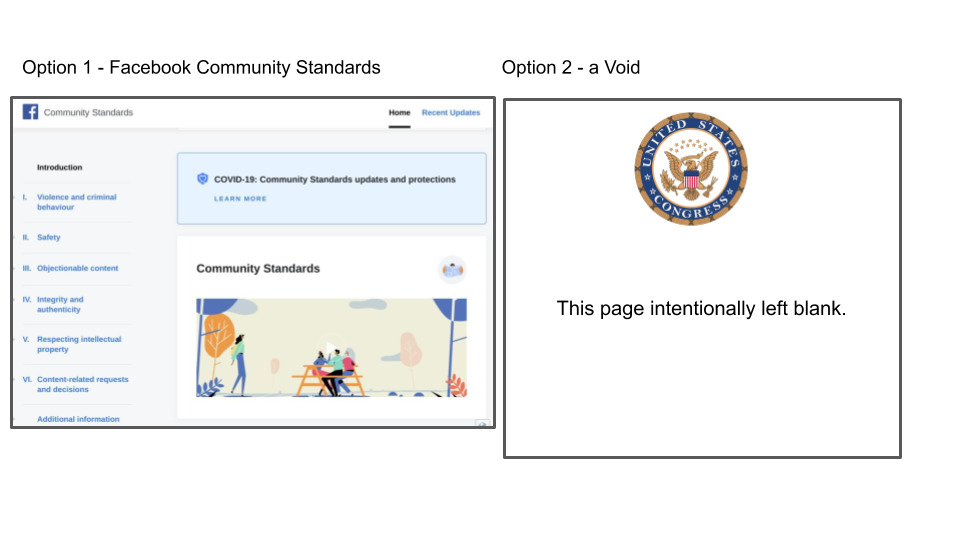

But this would be to apply a false equivalence – while other countries have created a rivalrous code in their laws restricting speech, the US has expressly forbidden its legislators from creating any such code.

In countries such as Germany, the debate between government and platforms centres on ‘my code or yours’, while in the US it is between platform codes and a (deliberate) void – there is no equivalent ‘my code’ in US law.

Those who drafted the First Amendment feared what Congress might do if it had the power to regulate speech and so deprived legislators of this capability precisely so that the US would not end up with laws like the ones adopted by Germany and many other countries.

What the First Amendment does not seek to do is prohibit others, who do not have the unique powers of Congress, from imposing their own restrictions as long as these do not have the force of law.

If we look at one of the other areas covered by the First Amendment, freedom of worship, we see that there is an intent to ensure that people can follow any religion not just a set of orthodox religions defined by Congress.

This has created the space for new faiths like Mormonism to grow in the US without the fear that more established churches, like the Episcopal and Catholic churches, might lobby Congress to have them outlawed.

But what the First Amendment does not do is insist that established churches adopt Mormon beliefs or give a right to Mormon preachers to speak in their places of worship.

As we consider speech on the internet, the goal of the First Amendment is not realised by having all platforms permit all speech, but by ensuring that platforms that take an unorthodox approach to speech are not shut down.

Success is that there should be a space for platforms like Gab and Parler, if Americans want these to exist, rather than that Facebook and Twitter are prohibited from creating and enforcing their own rules.

Some US Rules

Given that the US is fiercely opposed to legislators setting speech rules, and prefers for this power to rest with individuals and organisations, it is worth looking beyond the platforms to see how other public spaces are managed.

In my last post, I drew an analogy between football clubs and online platforms as both being private managers of public spaces, and in this vein it is interesting to look at the rules governing US sports venues.

We see that Major League Baseball, the National Football League, the National Basketball Association and Major League Soccer all have rules governing acceptable speech in their venues – there are no ‘anything goes’ First Amendment rights when the public is in these spaces.

The Major League Soccer code includes language that is very similar to the restrictions we find in platform standards:

The following conduct is prohibited in the Stadium and all parking lots, facilities and areas controlled by the Club or MLS:

Displaying signs, symbols, images, using language or making gestures that are threatening, abusive, or discriminatory, including on the basis of race, ethnicity, national origin, religion, gender, gender identity, ability, and/or sexual orientation

They have their own version of a political advertising ban:

Displaying signs, symbols or images for commercial purposes or for electioneering, campaigning or advocating for or against any candidate, political party, legislative issue, or government action

And the most serious sanction for breaching the code is ejection from match venues, potentially on a permanent basis:

Any fan who violates any of these provisions may be subject to penalty, including, but not limited to, ejection without refund, loss of ticket privileges for future games and revocation of season tickets. Any fan who (1) conducts themselves in an extremely disruptive or dangerous manner (“Level 1 Offenses”), or (2) commits multiple violations during a 12-month period, may be banned from attending future MLS matches for up to a year or longer. Level 1 Offenses include, but are not limited to, violent behavior, threatening, abusive or discriminatory conduct or language, trespassing or throwing objects onto the field, use or possession of unauthorized pyrotechnics or other dangerous Prohibited Items, or illegal conduct.

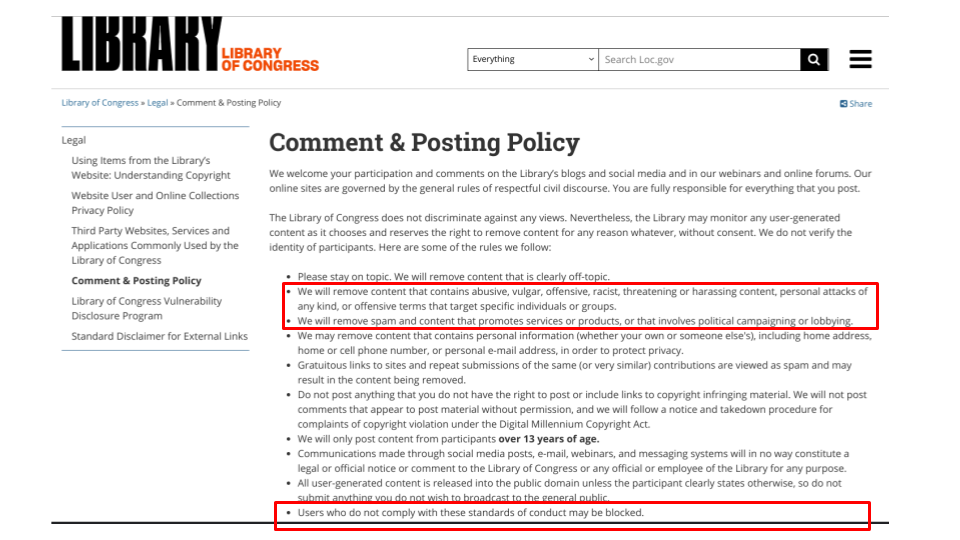

Perhaps even more appositely for politicians, we can look at the standards that the Library of Congress has created for people who want to comment on its website.

Again, we find restrictions on hate speech and insults with the penalty being that you may be ‘ejected’, ie blocked from making further comments, and we can find similar provisions in the terms of countless US websites.

The picture this paints is of an orthodox position where private owners of publicly-accessible spaces in the US will routinely prohibit hate speech and insults using language that is closer to that in German law than it is to anything in US law.

The penalty for breaching these speech rules is not criminal but one of exclusion from the spaces controlled by an organisation.

The fact that the First Amendment exists means that, while this may be an orthodoxy, it is not mandated, and that Congress is not able to prohibit others from following a more heterodox approach where they permit speech that the mainstream does not allow.

And this context provides a different lens through which to consider the question of how major platforms are treating Donald Trump and others on the populist right.

We should not see platforms as violating an assumed ‘unlimited speech’ orthodoxy but rather as being really quite mainstream in terms of US norms.

The question then becomes one of whether some or all platforms should be required to accept the unorthodox position of permitting speech that would not be allowed in a public sports stadium or on other websites, and, if so, what is the rationale for forcing them to depart from the norm?

The fact that platforms may be big does not stand up on its own as a reason for imposing special requirements on First Amendment grounds – some religions and broadcasters are giants in their respective fields but remain self-governing.

And while there may be a viable theory for substituting the government’s judgement for that of the platforms in other countries, to do so in the US would itself seem to go against the intent of the First Amendment.

Whatever the outcome of this rather theoretical debate, on a more practical note I wish any of my readers who are in the US, whoever they voted for, a smooth transition of power this week and successful new administration.

A different philosophy of free speech between the US and European countries that I was not aware of. Looking at the issue of Twitter/Facebook through this prism, their behaviour doesn’t seem unusual in the US.

However, how does this view change, if at all, in light of the reach and monopolistic nature of these platforms? If, for example, I don’t like Facebook’s tolerance of Trump, but I am unwilling to patronise the ultra-right-wing ones, I can’t really take ‘my business’ elsewhere, as there is no mainstream alternative to Facebook.

This is a good question to ask about whether people have alternatives, but I wonder if we may sometimes be too quick to feel we have to leave platforms because of decisions they have taken to exclude or include someone we like or dislike.

The threat of leaving may feel like a good way to ‘punish’ a platform for a decision you don’t like, but this starts to become impossible if there are people walking away whichever decision you make, ie group A will leave if you ban someone but group B will leave if you allow them to stay on.

We can believe platform rules are wrong but still be willing to respect them as a condition of use, just as we know we have to abide by rules that govern other spaces whether or not we like them.

I really enjoyed this post, thank you! Liked the analogy with sports clubs/venues, I had never thought of that 🙂

Thanks! Great reading — especially when one sees NetzDG-like bills popping up across the world, including (especially?) in less democratic countries. Just had the impression that Merkel was really troubled with the ban/suspension, not sure the removal of Trump’s posts in connection with the Capitol riot would be deemed problematic…

Thanks for this interesting article!

I’ll say I don’t find the analogy with sports clubs to be particularly compelling. Sports clubs are in the business of putting on sporting events. They have rules that restrict behavior that might interfere with that particular goal. So politics, hate speech, etc. are all reasonably banned because there is no place for them in the furtherance of their goals. They also restrict videotaping the games, bringing in your own food, etc. I mention all this to point out that sports stadiums are NOT the same as the town square, and any analogy equating the two will invariably fall short.

Things also don’t miraculously change because they’re online either. To further your sports analogy, someone could open up a Manchester United fan club forum, and ban a rabid Liverpool fan who kept up an endless litany of “ManU sucks, Liverpool rules” posts. They’re in the business of providing a meeting place for ManU fans, so kicking out a Liverpool fan is completely reasonable.

Twitter is different. It’s in the business of conversation on a mass scale. For better or worse, it has become the de facto modern “town square”. Because of this, should it not be held to a higher standard? And the fact that it’s “not a monopoly” isn’t particularly relevant when all the alternative platforms are either enacting similar policies or are themselves pushed out of existence. Now, Donald Trump is not suffering a stifling of speech. He has a plethora of avenues for getting his speech out there. I shed no tear for him. But there are lesser known or heard from voices that are suffering the same fate, and it’s those voices that I stand for. The first amendment doesn’t apply to Twitter, of course, but that doesn’t mean we can’t accuse them of censorship or decry rather than applaud their actions.

And let’s not forget that the Red Scare from 60 years ago was essentially a cabal of private organizations plotting together to restrict speech they considered “dangerous” at the time. I had thought that we considered the Red Scare a teaching moment about the sad excesses of censorship rather than a playbook for the 21st century.

The world is different than it was 20 years ago and certainly different than it was 250 years ago. The agitator on a soap box in the town square became so sacrosanct that we enshrined his actions in the very first amendment to our constitution. How does it work when the soap box and town square are both owned by private companies? Do we care?

Thanks for this thoughtful response.

No analogy is perfect but I do think the sports ground one holds up in that the primary purpose of the rules is to keep things orderly in a public space where crowds gather, and I think this is what platform standards are also trying to do. There may be important differences in what is permitted in various environments, but the common thread is that there are rules of behaviour rather than saying ‘anything goes’ in large social spaces.

For example, a racist can use all kinds of racial slurs in their own home, and in the US they could do so in the street without being prosecuted. But when they go to a sports ground they would be expected to refrain from doing this, and I think it is reasonable for platforms similarly to expect restraint from their users. The reverse situation, where the managers of a social space would be forced to allow racial abuse even where this causes real problems for other guests, seems unsustainable.

Having said all that, I have a lot of sympathy with your point about the risk to lesser known and more marginalised voices. The actions of large platforms can be even more impactful for individuals who do not have the profile of a senior politician. It is important that platforms explain their rules clearly and enforce them fairly so people know where they stand. And I see an important role for regulators in overseeing the way platforms create standards and implement them precisely so that individuals have someone powerful on their side to keep a check on services.

Lastly, on the public square question, I would suggest that ‘the internet’ is the public square rather than any particular service that runs over it. The power of the platforms that are big today is of course material, but the biggest threat to the spirit of the first amendment would be anything that limits people’s ability to create their own spaces with their own rules if they disagree with the ‘established’ platforms.

Very interesting and clear analysis – and as a German I do prefer a Free Speech position over the German “Mind Control” which has already led to Nazi Germany in other times.

One question I have, though, is: why do you name communication channels and meet markets “platforms”? Would you consider the New York Times “Letter to the Editor” section a platform, or pubs, public parks, etc.? Precise Speech – from my point of view – is at least as important as Free Speech.

The term “platform” denominates infrastructure that sets standards for services delivered there. Typical platforms are computer operating systems, power grids, or train stations. As soon as you name FB a “platform” you risk assigning the right to set standards as to the content delivered there.

Thanks, Peter, you make a good point on language and you are right to challenge me!

I tend to use the term ‘platforms’ as the debate has become one about ‘platform regulation’ but this can cover a multitude of sins. I sometimes talk about ‘social media services’ especially when contrasting them with entities like the New York Times that I would describe as ‘classic media’.

The term ‘platform’ becomes a useful shorthand when we want to talk about issues like community standards as a variety of different services, eg search and online marketplaces, as well as social media all have these. But the issues may be quite different for people at the more infrastructural end of the spectrum, eg cloud hosting services, from those which manage user-generated content.

I will think about this some more as I can see that I may be assuming everyone has the same understanding of what is meant when we throw around the word ‘platform’ when we may actually have quite different things in mind. Thanks again for picking it up.